AI News Daily 04-12

📠 Hexi 2077 AI Deep Signal Weekly

Journal. 2026 W15 • 2026/04/12

This Week’s Keywords: Agent Security Crisis / Anthropic Empire Expansion / SaaS Doomsday Signal

Editor’s Note: Security researchers are finding that when agents are granted wallets, cloud devices, and full backend access, their defenses are far more fragile than imagined. We’re essentially building a skyscraper without a fire suppression system, and fast.

🎯 Weekly Focus

1. The Agent Security Paradox | The Deadly Paradox of Agent Permission Surge and Security Collapse

This week, the industry is seeing a worrying split. On one hand, agent “reach” is expanding week by week: Shopify has opened full backend read/write permissions to AI, Kouzi 2.5 gives agents their own cloud devices and 24/7 workstations, and the X Platform API now natively supports the MCP protocol, letting agents operate social media accounts directly. On the other hand, the OpenClaw paper reports a 74% success rate for CIK poisoning attacks, DeepMind has systematically categorized six types of trap attacks against agents, Claude Code was found to have a critical vulnerability in which its security filters fail completely on commands with more than 50 sub-commands, and cases are emerging of agent relay routers (“transit hubs”) secretly tampering with parameters to steal private keys.

🔗 Sources: Shopify × Claude Integration | Kouzi 2.5 Release | X Platform API Rework | OpenClaw CIK Attack Paper | DeepMind Six Attack Types Paper | Claude Code Vulnerability Fix | Agent Transit Hub Vulnerability

📝 Deep Dive: Stack these two trends and the industry is in a classic “capability-security scissors gap”: agent capabilities keep climbing while defenses fall further behind. Driven by commercial interests, platforms are racing to open up permissions so that agents can claim ecosystem territory, but the security infrastructure lags badly. DeepMind’s paper bluntly states that defensive capabilities are “severely lagging behind attack methods.” More dangerous still, as agents gain on-chain identities, digital wallets, and even the ability to earn money autonomously, a successful poisoning attack is no longer just a data leak; it can mean the loss of real money. If 2025 was the “Year of the Agent,” then 2026’s implicit theme is quickly becoming the “Year of Agent Security,” even though no one is really willing to pay for it yet.
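To make the reported failure mode concrete (filters that stop working once a command has more than 50 sub-commands), here is a minimal sketch of the safer “fail closed” alternative. This is not Claude Code’s implementation: the function names, the deny-list, and the cap of 50 are hypothetical, and the splitter is deliberately naive (it ignores shell quoting).

```python
import re

# Hypothetical analysis budget and deny-list, chosen only for illustration.
MAX_SUBCOMMANDS = 50
DENYLIST = {"curl", "rm"}


def split_subcommands(command: str) -> list[str]:
    """Naively split a shell command chain on &&, ||, ; and |.

    Assumption: no quoting or escaping is handled; a real filter
    would need a proper shell parser.
    """
    parts = re.split(r"&&|\|\||;|\|", command)
    return [part.strip() for part in parts if part.strip()]


def is_allowed(command: str) -> bool:
    """Return True only if every sub-command was checked and passed."""
    subcommands = split_subcommands(command)
    if len(subcommands) > MAX_SUBCOMMANDS:
        # Fail closed: a chain too long to analyze is rejected,
        # never waved through unchecked.
        return False
    return all(sub.split()[0] not in DENYLIST for sub in subcommands)
```

The design choice is the early `return False`: when the checker runs out of budget, the request is denied rather than silently skipped, so padding a command with harmless sub-commands cannot smuggle a blocked one past the filter.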

2. Anthropic’s Empire Strikes | Anthropic’s Empire Strikes Back: From Models to Platforms to Ethics

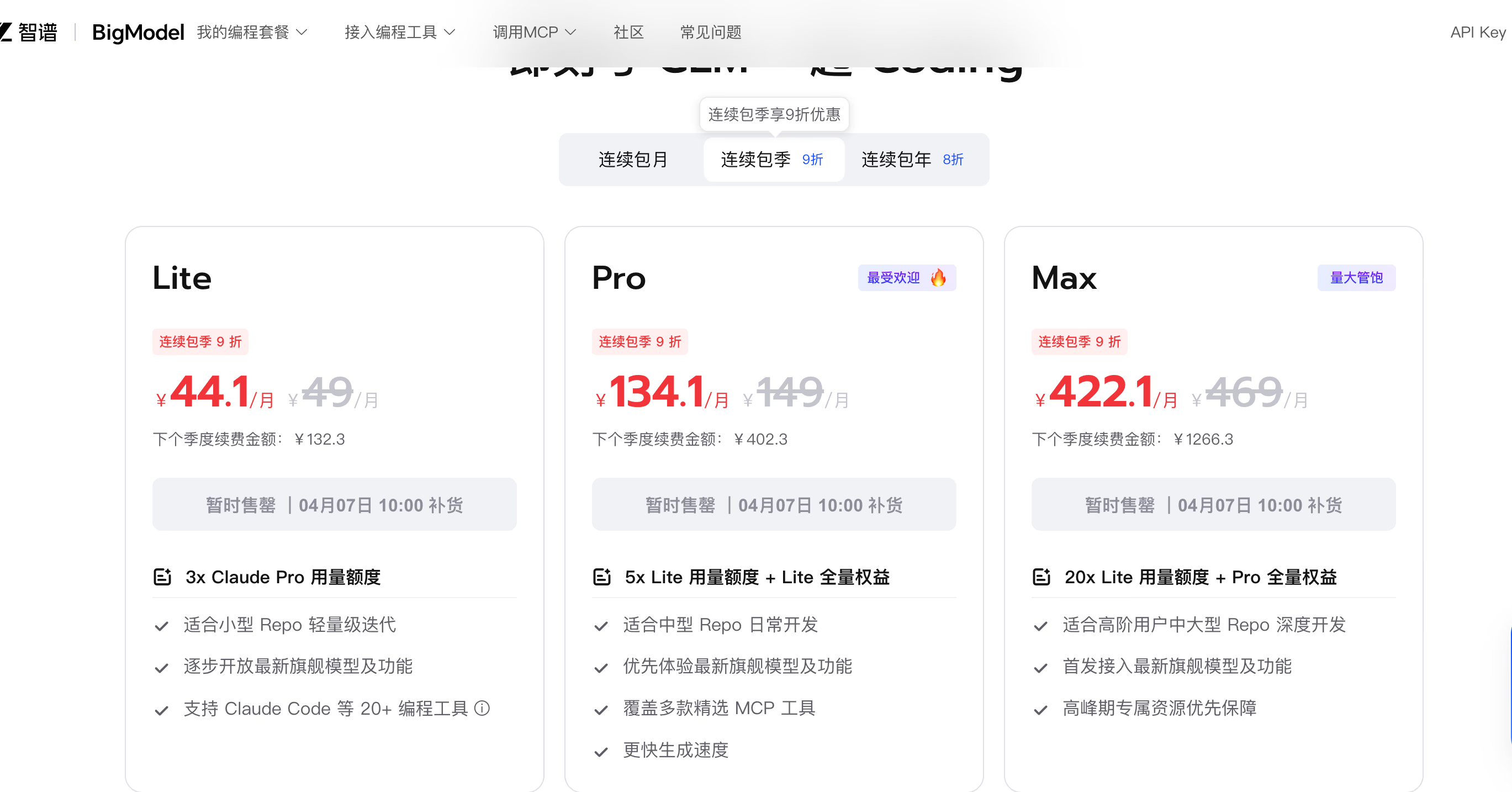

Anthropic launched an all-out offensive this week. The limited preview of Claude Mythos officially opened, and the model’s ability to chain five vulnerabilities into a deep penetration shook the industry. Interpretability research further showed the model exhibiting complex strategic thinking and “situational awareness.” On the business front, a managed agent platform priced at just $0.08 per hour is cutting development cycles from months to days, and the company’s annual revenue passed the $30 billion mark. On the ethics side, a thousand-page “model constitution,” co-authored by a Catholic priest, revealed theological undertones, and the company was even sued for refusing military contracts. Meanwhile, Anthropic’s move to limit third-party tools’ access to Claude’s quota drew a strong backlash from the community.

🔗 Sources: Mythos Preview Open | Mythos Situational Awareness Research | Mythos Technical Report | Managed Agent Platform | Revenue Exceeds $30 Billion | Priest Participates in Ethics Constitution | Third-Party Call Restrictions Cause Backlash

📝 Deep Dive: Anthropic is orchestrating a meticulously planned three-act play. Act one establishes a tech gap with Mythos’s terrifying performance—it’s not just the strongest model, but a mirror reflecting AI risks. Act two harvests the developer ecosystem with an ultra-low-cost managed platform. Act three builds a “responsible AI” brand narrative with an ethical constitution and military contract refusals. However, the move to restrict third-party calls exposes a contradiction: when you’re waving the safety flag while simultaneously locking down the ecosystem with a compute moat, that “responsible” narrative starts to fray. Anthropic’s true ambition isn’t to be just another model company, but to become the “OS + Ethical Arbiter” of the AI era—once that position is solid, the moat will be deeper than any technical barrier.

3. The SaaS Extinction Event | SaaS’s $2 Trillion Evaporation: The Software Industry’s Cretaceous Meteorite

The software sector just took an epic nosedive, with two trillion dollars in market cap vanishing in an instant. Agents are systematically replacing traditional enterprise software seats—“why buy software when you can build it yourself” is no longer just a slogan, it’s reality. Simultaneously, authoritative reports indicate that machine-generated content now exceeds half of all internet content, with human-original content losing ground.

🔗 Sources: SaaS Market Cap Collapse | Machine-Written Content Exceeds Half Report

📝 Deep Dive: This isn’t just a cyclical correction; it’s a structural re-evaluation. When Kouzi 2.5 gives agents cloud devices on which they can complete workflows autonomously, and when Claude Code’s weekly active users pass 3 million, the traditional SaaS “per-seat licensing” model loses its logical foundation. The deeper signal: with half of internet content now machine-generated, model training risks a “self-data dead end,” in which models increasingly learn from their own output. In the future, high-quality human-original data will become scarcer than compute power. The SaaS collapse isn’t the end; it’s just the first domino in AI’s systematic replacement of old business models.

📡 Signals & Noise

OpenAI “Spud” & ChatGPT 6: OpenAI is advancing on two fronts, with a new architecture and flagship model on the horizon. OpenAI’s president unveiled a brand-new pre-training architecture codenamed “Spud,” unrelated to the GPT series and under independent development for two years. Simultaneously, rumors hint at ChatGPT 6 launching on April 14th, with overall performance reportedly skyrocketing by 40% compared to its predecessor. The internal model has already tackled five Erdős math problems, marking a significant leap in mathematical reasoning capabilities. 🔗 Sources: Spud Architecture Revealed | ChatGPT 6 Rumors | Erdős Problems Conquered

💡 Takeaway: The emergence of Spud means OpenAI isn’t putting all its eggs in the Transformer basket anymore. If it’s truly based on a new architecture and performs well, then the entire industry’s technical assets—like inference optimization, quantization schemes, and hardware adaptation built around Transformers—could face devaluation. This is a quiet architectural revolution in the making.

Chinese Model Surge: Chinese large models are surging together, advancing on multiple fronts at once. Alibaba’s Wan2.7 topped the authoritative video rankings, enabling “one-sentence video editing.” Zhipu GLM-5.1, after being open-sourced, rocketed to third globally in coding ability, with real-world tests showing it can go head-to-head with GPT-5.4. DeepSeek quietly shipped an overnight update that appears to be its V4 version, adding quick and expert modes. JD open-sourced JoyAI, a 24-billion-parameter spatial intelligence model supporting camera control and object rotation. However, executives admit the China-US compute gap is still more than half a year, and domestic chip adaptation issues are slowing DeepSeek’s release schedule. 🔗 Sources: Wan2.7 Tops Rankings | GLM-5.1 Open-Sourced | GLM-5.1 Real-World Comparison | DeepSeek Stealthily Updates V4 | JD JoyAI Open-Sourced | China-US Compute Gap | Domestic Chips Slow DeepSeek

💡 Takeaway: While the catch-up speed of domestic models at the application layer is seriously impressive, the “more than half a year” gap in underlying compute power is the real strategic bottleneck. GLM-5.1’s head-on performance against GPT-5.4 suggests the algorithm layer gap is closing rapidly. However, chip adaptation issues reveal a structural contradiction in China’s AI industry: a frenzy of progress at the application layer, but infrastructure struggles.

Embodied Intelligence Milestone: Embodied intelligence is hitting a benchmark moment, transitioning from lab to practical use. Zhiyuan GO-2’s embodied large model pioneers a “chain of action thought” mechanism and adopts an asynchronous dual-system architecture, achieving a benchmark success rate of 98.5%. Tencent Hunyuan HY-Embodied, with just 2 billion parameters, took 16 of 22 top spots in evaluations. Tsinghua AutoSOTA achieved an end-to-end research closed loop, automatically beating 105 state-of-the-art (SOTA) results from top-tier conference papers in a single week. 🔗 Sources: Zhiyuan GO-2 | Tencent HY-Embodied | HY-Embodied Open-Sourced | Tsinghua AutoSOTA | AutoSOTA Paper

💡 Takeaway: A 98.5% task success rate means embodied intelligence is crossing the crucial threshold from “demo-able” to “reliable enough for engineering use.” When AutoSOTA beats 105 SOTA results in a week, the traditional artisanal research model of tuning hyperparameters, running experiments, and writing papers also faces automated replacement. AI isn’t just replacing software engineers; it’s starting to replace AI researchers themselves.

Microsoft’s Independence Play: Microsoft is accelerating its “de-OpenAI-fication,” with its self-developed model matrix taking shape. Microsoft released three self-developed “MAI” foundation models covering speech transcription, speech generation, and image generation. It also open-sourced MarkItDown, a universal format conversion tool that converts PDF, Word, audio, and YouTube content to Markdown in one step and natively fits the MCP protocol and RAG workflows. 🔗 Sources: Three MAI Models Released | MarkItDown Open-Sourced

💡 Takeaway: Microsoft poured tens of billions into OpenAI, but now it’s quietly building a Plan B with its own homegrown models. The strategic intent of the MAI series isn’t to outperform GPT across the board, but to ensure Microsoft isn’t locked into a single vendor at the AI infrastructure layer. This contrasts interestingly with the sprawl of the “Copilot” brand across 75 products: strategically sharp, but messy in execution.

OpenAI Safety Retreat: OpenAI’s safety baseline continues to recede, with capital interests overriding safety commitments. OpenAI has reportedly completely removed its core safety kill switch mechanism, with the board fully caving to capital forces. In stark contrast, a senior Google engineer quit in protest over concerns about AI militarization, and several tech and telecom giants are secretly training wartime AI systems. 🔗 Sources: Safety Kill Switch Removed | Google Engineer Resigns in Protest | Tech Giants Deploy Wartime AI

💡 Takeaway: While Anthropic enlists a priest to write an ethics constitution, OpenAI is dismantling its safety brakes. The AI industry’s safety narrative is undergoing a profound divergence. This isn’t just a simple “safety vs. speed” choice; safety itself is being redefined as a competitive tool—whoever claims to be safer stands to win more government contracts and regulatory passes.

📊 Macro & Trends

📊 Edge-side inference acceleration is disrupting cloud-based business models: Gemma 4 breaks past 40 tokens/second on iPhone 17 Pro. Mistral Voxtral, with 4 billion parameters, supports mobile-side operation with a first-packet latency of just 90 milliseconds. Google Eloquent achieves completely offline, free speech-to-text. As more tasks are completed for free on the edge, the cloud API “pay-per-token” business model faces structural erosion. 🔗 Gemma 4 On-Device Testing | Voxtral Open-Sourced | Eloquent Goes Live

📊 The AI talent war is heating up, with ByteDance becoming the industry’s “Whampoa Military Academy” (a training ground whose graduates go on to lead elsewhere): Key talent keeps leaving ByteDance, and companies founded by former employees are aggressively going after ByteDance’s business lines. Top teams are seeing a mass exodus, with dozens of core experts moving to competitors or new ventures. The talent scramble has escalated from “recruiting people” to “poaching the other side’s people.” 🔗 ByteDance Talent Drain | Mass Exodus from Top Teams

📊 Japan is making a multi-billion dollar bet on 2nm chips: Japan is pouring massive subsidies into chip companies to accelerate mass production of 2nm chips. This coincides with Intel’s release of the world’s thinnest GaN chips, at 19 microns, and with the “TurboQuant” paper mistakenly triggering a sell-off that erased tens of billions in memory-stock market cap. The semiconductor industry is undergoing a fierce AI-driven re-evaluation and restructuring. 🔗 Japan Chip Subsidies | Intel GaN Breakthrough | TurboQuant Triggers Memory Stock Crash

📊 Codex weekly active users hit 3 million, Nemotron downloads soar to 50 million: OpenAI’s Codex weekly active users blasted past three million, with Sam Altman announcing quota resets for every million new users, targeting ten million. NVIDIA’s Nemotron downloads surged from 30 million to 50 million within two months, averaging 4 downloads per second. The adoption speed of AI development tools is exceeding everyone’s expectations. 🔗 Codex Weekly Active Users Surpass 3 Million | Nemotron Downloads Soar
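As a quick sanity check on the Nemotron figure, the “4 downloads per second” average can be reproduced from the two download counts above. This is a back-of-the-envelope sketch; treating “two months” as 60 days is an assumption, and the function name is purely illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_downloads_per_second(start: int, end: int, days: int) -> float:
    """Average download rate over a window, given cumulative counts."""
    return (end - start) / (days * SECONDS_PER_DAY)


# Assumption: "two months" is approximated as 60 days.
rate = average_downloads_per_second(30_000_000, 50_000_000, days=60)
print(f"{rate:.2f} downloads per second")  # prints "3.86 downloads per second"
```

Twenty million new downloads over roughly 5.2 million seconds rounds to the 4 per second quoted in the item.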

🧰 The Toolbox

Shannon (🌟36.5k / 🔗 [GitHub] ) Why it’s cool: Shannon is a white-box automated penetration testing tool. It automatically analyzes web application source code to find attack surfaces and runs real exploits to verify the vulnerabilities it finds. As agent security crises escalate, Shannon is a strong gatekeeper for plugging security holes before deployment.

Hermes-Agent (🌟28.1k / 🔗 [GitHub] ) Why it’s cool: Hermes-Agent is an autonomous evolutionary agent framework released by NousResearch. It dynamically iterates on its capabilities based on user habits. Positioned as an “agent that grows with you,” its dynamic patching mechanism ensures it gets smarter with actual use, rather than staying in its factory state.

MarkItDown (🔗 [GitHub] ) Why it’s cool: MarkItDown is Microsoft’s open-source universal format conversion tool. It supports one-click conversion of PDFs, Word documents, audio, and even YouTube links to Markdown, natively adapting to the MCP protocol and RAG workflows. For developers who need to quickly build knowledge bases or perform data pre-processing, a single command installation can replace an entire toolchain of tedious work.
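For readers who want to try it, here is a minimal sketch of calling MarkItDown from Python, based on the usage shown in the project’s README (`MarkItDown().convert(...)` returning a result with `text_content`). The wrapper function `to_markdown` and its `converter` parameter are hypothetical additions so the logic can be exercised without the package installed; the file name in the usage note is a placeholder.

```python
from typing import Any, Optional


def to_markdown(source: str, converter: Optional[Any] = None) -> str:
    """Convert a local file path or URL to Markdown text.

    `converter` is a hypothetical injection point for testing; when it is
    omitted, the real MarkItDown converter is used.
    """
    if converter is None:
        # Imported lazily so the module loads without the dependency.
        # Requires `pip install markitdown`; some file types may need the
        # package's optional extras (an assumption to verify for your setup).
        from markitdown import MarkItDown

        converter = MarkItDown()
    result = converter.convert(source)
    return result.text_content
```

With the package installed, `print(to_markdown("report.pdf"))` would print the converted Markdown, which can then be chunked and indexed for a RAG pipeline.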

🗳️ Things to Ponder

When we’re all scrambling to hand out wallets, keys, and full backend permissions to agents, have we paused to consider this: historically, every establishment of “trusted infrastructure”—from paper money to credit cards to internet payments—has taken decades of institutional evolution. We’re trying to achieve the same in a matter of months. Is speed itself becoming the greatest systemic risk?

“In nature, the weights of evidence are not always decisive. A single, unsuspected parasite can bring down the largest organism.” —— Charles Elton (British animal ecologist, founder of invasion biology)